date: 2026-03-16
readtime: 7
description: Why the shape of normal behavior is one of the best early warning signals on Windows and how vector baselines detect it.
categories: [Detection, Baseliner]

Vector baselines and host behavior

Most security monitoring focuses on what happened, such as a failed login, a new admin, or a suspicious process. That approach is useful, but many strong warning signs do not come from one event. They come from a change in how a host behaves over time. When normal behavior starts to shift, that is often where analysts first see a real problem forming.

Vector baselines address this gap by learning the usual shape of activity for each host and then detecting when that shape changes. Instead of writing and tuning many static thresholds, you let the system learn what is typical for each machine. The key question becomes simple and practical: Does this hour look like what this host usually does?

The idea in one sentence

Instead of watching isolated events, we learn what normal behavior looks like for each host by hour and by day type, and then we raise a signal when current behavior is statistically far from that learned normal, even when no single event looks obviously malicious.

Why "shape" matters

Every Windows machine produces a stream of event codes for logons, process creation, service activity, and network-related operations. Over time, each host develops a recognizable pattern. A workstation at 10 AM on a Monday usually behaves as it did on previous Monday mornings, while Sunday night on the same workstation looks very different. This recurring profile acts like a behavioral fingerprint.

If that profile changes sharply in the same context, for example the same host and similar time slot, something meaningful may have changed as well. It can be malware execution, credential misuse, lateral movement, or an operational misconfiguration. Vector baselines turn this intuition into a measurable distance from expected behavior.

Vectors, not just counts

A single hourly count is too coarse because it cannot distinguish many logons from many process launches. Vector baselines use a vector where each dimension corresponds to an event code and each value represents how many times that code appeared in the hour. This gives a richer representation of activity. Different mixes produce different vector shapes, even when total volume is similar.
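To make the vector idea concrete, here is a minimal sketch in Python. The list `EVENT_CODES` and the function `hourly_vector` are illustrative names rather than part of any product, and the choice of four Windows event IDs is an assumption made to keep the example short. It shows two hours with the same total volume but a different mix.

```python
from collections import Counter

# Hypothetical, fixed ordering of event codes used as vector dimensions.
# 4624 = successful logon, 4625 = failed logon,
# 4688 = process creation, 7045 = service installed.
EVENT_CODES = [4624, 4625, 4688, 7045]


def hourly_vector(event_codes_seen, dimensions=EVENT_CODES):
    """Count how often each tracked event code occurred in one hour."""
    counts = Counter(event_codes_seen)
    return [counts.get(code, 0) for code in dimensions]


# Two hours with identical total volume (50 events) but a different mix.
logon_heavy = hourly_vector([4624] * 40 + [4688] * 10)
process_heavy = hourly_vector([4624] * 10 + [4688] * 40)

print(logon_heavy)    # [40, 0, 10, 0]
print(process_heavy)  # [10, 0, 40, 0]
print(sum(logon_heavy) == sum(process_heavy))  # True
```

A single count would treat these two hours as identical, while the vectors keep them apart.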

The system then derives a compact summary from the vector and learns the expected value and variability for each host in each time context. If the current value moves far enough away from that baseline, for example several standard deviations, the hour is marked as anomalous. In practice, this gives you a statistically grounded signal instead of a hard-coded threshold.
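The scoring step can be sketched as follows. The article does not say how the compact summary is computed, so the weighted sum in `summarize` is an assumption, as are the weights and the sample history. The Z-score itself is the standard definition: the distance from the historical mean, measured in standard deviations.

```python
import statistics


def summarize(vector, weights):
    """Collapse a count vector into one number (assumed: a weighted sum)."""
    return sum(w * v for w, v in zip(weights, vector))


def z_score(current, history):
    """Return how many standard deviations `current` lies from the mean."""
    mean = statistics.mean(history)
    std = statistics.pstdev(history)
    if std == 0:
        # No observed variability: any difference is infinitely unusual.
        if current == mean:
            return 0.0
        return float("inf") if current > mean else float("-inf")
    return (current - mean) / std


# Hypothetical weights that make failed logons and service installs count more.
weights = [1.0, 5.0, 1.0, 10.0]

# Hypothetical summaries from earlier hours in the same host and time context.
history = [48, 52, 48, 52]
current = summarize([40, 0, 8, 1], weights)  # 40 + 0 + 8 + 10 = 58

print(z_score(current, history))
```

With these illustrative numbers the baseline mean is 50 and the standard deviation is 2, so the current hour sits four standard deviations above normal.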

Workdays, weekends, holidays

Normal behavior is not one universal value. A workday morning, a weekend evening, and a holiday can all have different traffic patterns. For this reason, separate baselines are learned for workdays, weekends, and holidays. This significantly reduces false positives because the model compares behavior to the right context instead of forcing one global expectation.
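One way to keep these contexts apart is to key each baseline by host, day type, and hour. The sketch below is an assumption about how such a key could look. The names `day_type` and `baseline_key`, the host name, and the holiday calendar are all hypothetical.

```python
from datetime import date, datetime

# Hypothetical holiday calendar; a real deployment would load its own.
HOLIDAYS = {date(2026, 12, 25)}


def day_type(ts, holidays=HOLIDAYS):
    """Classify a timestamp as 'holiday', 'weekend', or 'workday'."""
    if ts.date() in holidays:
        return "holiday"  # holidays take precedence over the weekday
    if ts.weekday() >= 5:  # Monday is 0, so 5 and 6 are Saturday and Sunday
        return "weekend"
    return "workday"


def baseline_key(host, ts, holidays=HOLIDAYS):
    """Build the lookup key under which a baseline is stored."""
    return (host, day_type(ts, holidays), ts.hour)


print(baseline_key("ws-01", datetime(2026, 3, 16, 10, 0)))  # a Monday morning
print(baseline_key("ws-01", datetime(2026, 3, 15, 22, 0)))  # a Sunday night
```

Each key then holds its own mean and variability, so a Sunday night is only ever compared with other weekend nights.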

In mature deployments, teams often split context one step further, for example into business hours versus off-hours, patch windows, and maintenance windows for specific server classes.

The more faithfully the context reflects real operations, the cleaner the anomaly signal becomes.

What you get in practice

In day-to-day operations, this approach gives each machine its own context-aware notion of normal activity. It removes much of the manual threshold tuning that usually slows down rule maintenance in large environments. It also gives analysts a simple interpretation layer. You can ask how unusual the current hour is, usually through a sigma or Z-score, and then decide how aggressively to respond.

It is important to treat vector baseline output as a high-quality signal, not as a final verdict. Teams usually get the best results when they combine this signal with other detections. For example, an unusual host behavior signal combined with suspicious authentication events typically gives much higher confidence than either signal alone.

How to interpret the signal

A useful way to operationalize the output is by severity bands. Low drift means behavior is slightly unusual and should be added to a watchlist. Moderate drift means the case should be triaged by an analyst in the normal queue. High drift means immediate enrichment and containment checks are appropriate.
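The bands above could be expressed as a small lookup. The thresholds of 2, 3, and 4 standard deviations are illustrative assumptions, not recommended values, and the names `BANDS`, `ACTIONS`, and `severity` are hypothetical.

```python
# Hypothetical thresholds, ordered from most to least severe.
BANDS = [(4.0, "high"), (3.0, "moderate"), (2.0, "low")]

# Response per band, following the description in the text.
ACTIONS = {
    "normal": "no action",
    "low": "add to watchlist",
    "moderate": "triage in the normal analyst queue",
    "high": "immediate enrichment and containment checks",
}


def severity(z):
    """Map a Z-score to a severity band using its absolute value."""
    magnitude = abs(z)
    for threshold, label in BANDS:
        if magnitude >= threshold:
            return label
    return "normal"


for score in (1.2, -2.5, 3.0, 7.0):
    band = severity(score)
    print(score, band, ACTIONS[band])
```

Using the absolute value means an unusually quiet hour is banded the same way as an unusually busy one, which may or may not suit a given environment.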

This keeps response proportional to risk and helps limit alert fatigue. It also creates a repeatable workflow in which analysts know what to do at each score level.

Typical analyst workflow

When a high anomaly score appears, analysts usually ask whether the host is in a known maintenance or deployment window and which event-code dimensions contributed most to the score. They also verify whether matching signals are present in authentication, EDR, or network telemetry, whether this is a one-hour spike or a sustained multi-hour pattern, and whether similar behavior appears on peer hosts with the same role.
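The question of which event-code dimensions contributed most can be approximated by ranking per-dimension deviations. This sketch assumes a mean and standard deviation are kept for every dimension, which the article does not state. The function `top_contributors` and all numbers are illustrative.

```python
def top_contributors(current, means, stds, codes, k=3):
    """Rank event codes by how far each count is from its own baseline."""
    scored = []
    for code, value, mean, std in zip(codes, current, means, stds):
        # Dimensions without observed variability are skipped (deviation 0).
        deviation = (value - mean) / std if std > 0 else 0.0
        scored.append((code, deviation))
    scored.sort(key=lambda item: abs(item[1]), reverse=True)
    return scored[:k]


codes = [4624, 4625, 4688, 7045]
current = [40, 30, 12, 1]      # counts seen in the anomalous hour
means = [38.0, 2.0, 10.0, 0.0]  # hypothetical per-dimension baselines
stds = [4.0, 2.0, 2.0, 0.5]

for code, deviation in top_contributors(current, means, stds, codes, k=2):
    print(code, deviation)
```

In this made-up hour the failed-logon code 4625 dominates, which would point the analyst toward authentication telemetry first.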

That sequence quickly separates benign operational changes from likely security incidents.

Where it shines

Vector baselines are especially useful for compromised account scenarios, suspicious host behavior, and lateral movement where the activity mix changes before a clear signature appears. They are also useful for detecting misuse or malware behavior that alters service, process, or network patterns in subtle but persistent ways.

Another practical benefit is visibility into drift. Hosts can change role over time because of infrastructure changes, maintenance, or deployment updates. With baseline-based monitoring you can see that shift, investigate it, and then decide whether to adapt policies or keep alerting on the change.

Common pitfalls to avoid

A few implementation pitfalls show up often. Cold start effects can produce unstable scoring before enough history is collected. Role changes can make newly repurposed hosts look anomalous for legitimate reasons. Sparse telemetry can create artificial anomalies when logs are missing. Overly global settings, such as one threshold for all hosts, often reduce accuracy. Missing feedback loops can stall precision over time because analysts cannot label outcomes and improve the model behavior.
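Two of these pitfalls, cold start and missing telemetry, can be guarded against before any score is produced. The sketch below is one possible approach. The minimum of eight historical samples is an arbitrary assumption and `guarded_score` is a hypothetical name.

```python
import statistics

MIN_SAMPLES = 8  # hypothetical minimum history before scoring is trusted


def guarded_score(current, history, min_samples=MIN_SAMPLES):
    """Return a Z-score, or None when the score would not be trustworthy."""
    if current is None:
        return None  # no telemetry arrived for this hour
    if len(history) < min_samples:
        return None  # cold start: baseline is still being learned
    mean = statistics.mean(history)
    std = statistics.pstdev(history)
    if std == 0:
        return None  # no variability observed yet, so the score is undefined
    return (current - mean) / std


print(guarded_score(58, [48, 52]))        # too little history
print(guarded_score(None, [48, 52] * 4))  # log gap
print(guarded_score(58, [48, 52] * 4))    # enough history to score
```

Returning `None` instead of a number lets downstream logic distinguish "not scored" from "scored as normal", so a log gap is not silently read as a quiet hour.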

Designing with these constraints in mind improves both precision and analyst trust.

Takeaway

Vector baselines answer a practical question that analysts care about: Does this hour look like what this host usually does at this time? By learning expected behavior per host and per context, this method gives a lightweight and adaptive way to surface meaningful deviations, often earlier than single-event rules.

For full technical detail including configuration, weights, sigma thresholds, and integration into your detection pipeline, see Detecting User Activity Anomalies Using Event-Code Vector Baselines.

Most Recent Articles

You Might Be Interested in Reading These Articles

C-ITS: The European Commission is updating the list of the Root Certificates

23rd April 2021 marks the release of the fifth edition of the European Certificate Trust List (ECTL). This was released by the Joint Research Centre of the European Commission (EC JRC), and is used in Cooperative Intelligent Transport Systems (C-ITS). It is otherwise known as the L0 edition release, intended for use primarily in test and pilot deployments. Currently these activities are primarily European and focus on fields such as intelligent cars and road infrastructure.

press

automotive

c-its

v2x

security

Published on May 06, 2021

The 8th version of the European Certificate Trust List (ECTL) for C-ITS has been released

The Joint Research Centre of the European Commision (EC JRC) released the eight edition of the European Certificate Trust List (ECTL) used in Cooperative Intelligent Transport Systems (C-ITS). L0 ECTL v8 contains five new Root CA certificates and one re-keyed Root CA certificate. Three out of five newly inserted Root Certificates are installations that run on the TeskaLabs SeaCat PKI software for C-ITS.

press

automotive

c-its

v2x

security

Published on September 16, 2021

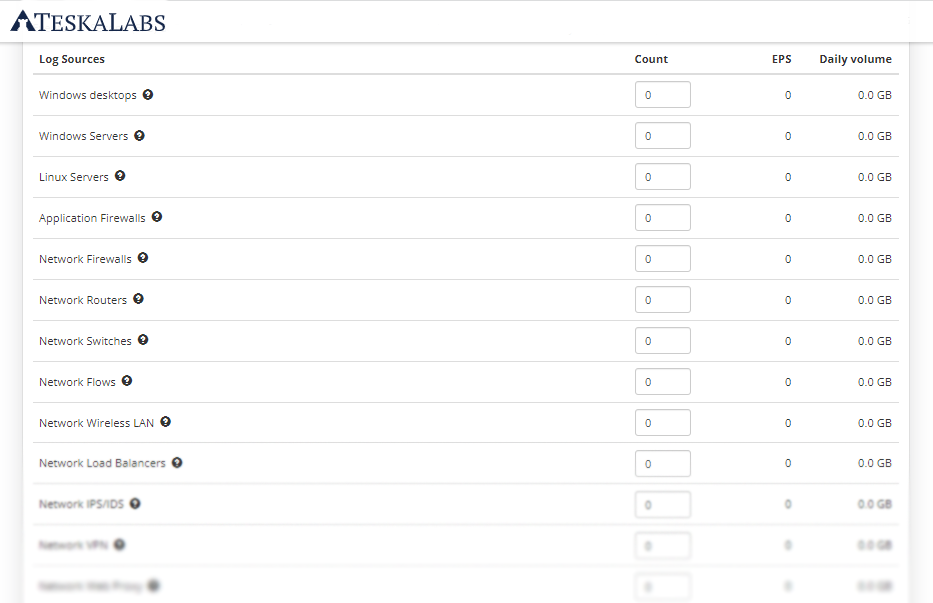

How big Log Management or SIEM solution does your organization need

Calculate size of IT infrastructure and how much EPS (Events Per Second) generates.

Published on December 15, 2021